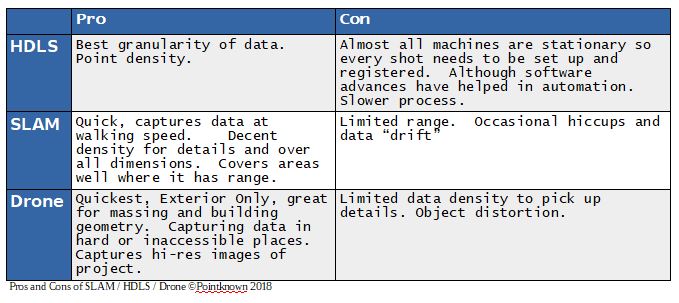

New tech and “reality capture” methods seem to pop up daily. The lens I look at them through, though, is how any of these technologies and methods can get us to a point quicker and more accurately. No, we’re not in Kansas anymore.

SLAM (simultaneous localization and mapping): a demo of these devices, specifically the ones developed by GeoSLAM, was the first time I did not want Bill to leave my office with what he brought. The conversation went something like this: “I’ll take it.” “Okay, we can write you up and deliver….” “No, I’ll take what you have in your hands right now. How much?” Subsequently, we bought another. For the combination of speed, cost and quality (granularity of data), I have found what it does pretty remarkable. It has its limitations, though, which is why we augment SLAM data with other collection methods to get us to a better whole; again, this depends on the scope of the project.

Many of you, certainly if you are reading this, are familiar with HDLS (High Definition Laser Scanning), which most people just refer to as LIDAR. Why make the distinction? Because LIDAR really is the big tent, laser scanning as a whole, and all the machines and processes are the acts. HDLS (FARO and Leica make the two machines I see and use most often) typically requires individual setups: the machine stays stationary, and the process, while slower and more cumbersome, still produces the highest density of data and can map high-res photos to the results to give you an immersive experience. All of these products are line of sight, so what can be “seen” in the field is what you see at your computer later. SLAM, with its mobility and speed of capture, can give you a fuller picture of an entire building much more economically.

Drones use high-res photos, processed through sophisticated software engines, to create 3D data meshes that can be exported into the standardized LAS format. Here is a link to data we created with Aerial Genomics and subsequently mapped to SLAM data. You can navigate around and view the data. Below is a screenshot.

To help explain the data density of HDLS, SLAM and drones, I’ve come up with a metaphor that is possibly slightly less confusing, or perhaps no help at all: steam, water and ice. In steam, water in the gas phase, the molecules are far apart, relatively speaking (drone data). In water, the liquid phase, they are tight (SLAM). In ice, water in solid form, they are locked into a rigid lattice (HDLS). With scanning, the points, like water molecules, are tighter or farther apart depending on the technology you are using, which is why we need to use different ones based on the scope of the project.
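To put a number on "tighter or farther apart," one common measure is the average distance from each point to its nearest neighbor. The sketch below is only an illustration: the flat grid patches and the three spacings are made-up stand-ins chosen to show the ordering, not measurements from any scanner or project.

```python
import math

def grid_cloud(spacing, size=1.0):
    """Build a flat, evenly spaced square patch of points (a stand-in for a scanned surface)."""
    n = int(round(size / spacing)) + 1
    return [(i * spacing, j * spacing) for i in range(n) for j in range(n)]

def mean_nearest_neighbor(points):
    """Average distance from each point to its closest neighbor (brute force)."""
    total = 0.0
    for i, p in enumerate(points):
        total += min(math.dist(p, q) for j, q in enumerate(points) if j != i)
    return total / len(points)

# Hypothetical spacings in meters, for illustration only.
samples = {"drone (steam)": 0.25, "SLAM (water)": 0.1, "HDLS (ice)": 0.05}

for name, spacing in samples.items():
    cloud = grid_cloud(spacing)
    avg = mean_nearest_neighbor(cloud)
    print(f"{name}: {len(cloud)} points on a 1 m patch, mean spacing {avg:.3f} m")
```

The brute-force search is fine for a few hundred points; a real cloud with millions of points would need a spatial index instead.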

Of the images I have included, the first was created through HDLS using a tripod-mounted, stationary FARO Focus. If you zoom in, you can see the handles on the cabinets, the images in the painting, etc. This is because the machine takes photos as part of the process (at least in this case) and maps them to the collected points, giving us a true 3D representation of the space, which is the back of a theater, by the way.

In the second, I have included the SLAM cloud and the HDLS cloud together. You can see where the SLAM data backfilled around the objects and the cabinets, giving us data to model from: the extents of walls, heights and profiles of objects, etc.

The last is solely a SLAM cloud. I can tell what and where the objects are, but the cloud is just a mass with no photos, and the detail and density would not allow us to make out, let’s say, the cabinet handles. Do you need definition of handles? Or, as in most cases of documenting existing conditions, do you just need a volumetrically correct model that looks good in elevation and model view?

We’ve completed projects where the client wanted a 3D database of almost everything because they were retrofitting an HVAC package into an existing and very significant building. They wanted to A) document everything properly and B) be able to fabricate any objects from the data in case they were damaged during construction. This was obviously a case where we needed to create high-def scans throughout the entire building. In the classrooms, though, which did not have significant architectural detail, we used our own software, PKNail, to create the geometry. It’s the ability to blend technologies and capture methods that allows a service provider to best serve the client from a detail and budgetary point of view.

After you’ve completed the data collection and made sure all the data is registered to each other, even from disparate sources (that is, SLAM with HDLS, with drone-captured data, etc.), you’ll need to choose whether you want Revit or AutoCAD…but that’s an entirely different conversation. More often than not, though, when using these technologies together you are creating a robust 3D database of the building that can be revisited for any questions or even new documentation needs.
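One simple way to sanity-check that clouds from different sources are registered to each other is to compare a few control points that appear in both and look at how far apart they land. This is a minimal sketch, not the workflow described above: the coordinates, the matched pairs and the 1 cm tolerance are all hypothetical.

```python
import math

def rms_residual(pairs):
    """Root-mean-square distance between matched control points."""
    squared = [math.dist(p, q) ** 2 for p, q in pairs]
    return math.sqrt(sum(squared) / len(squared))

# Hypothetical matched targets in meters: the same physical point
# as located in the HDLS cloud (first) and the SLAM cloud (second).
control_pairs = [
    ((0.0, 0.0, 0.0), (0.003, 0.0, 0.004)),
    ((5.0, 0.0, 0.0), (5.0, 0.005, 0.0)),
    ((5.0, 4.0, 3.0), (5.004, 4.0, 3.003)),
]

TOLERANCE_M = 0.01  # assumed acceptance threshold, set per project scope

residual = rms_residual(control_pairs)
status = "OK" if residual <= TOLERANCE_M else "re-register"
print(f"RMS residual: {residual * 1000:.1f} mm ({status})")
```

The right tolerance depends on the scope of the project; a fabrication-grade database would need a much tighter number than a volumetric model.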

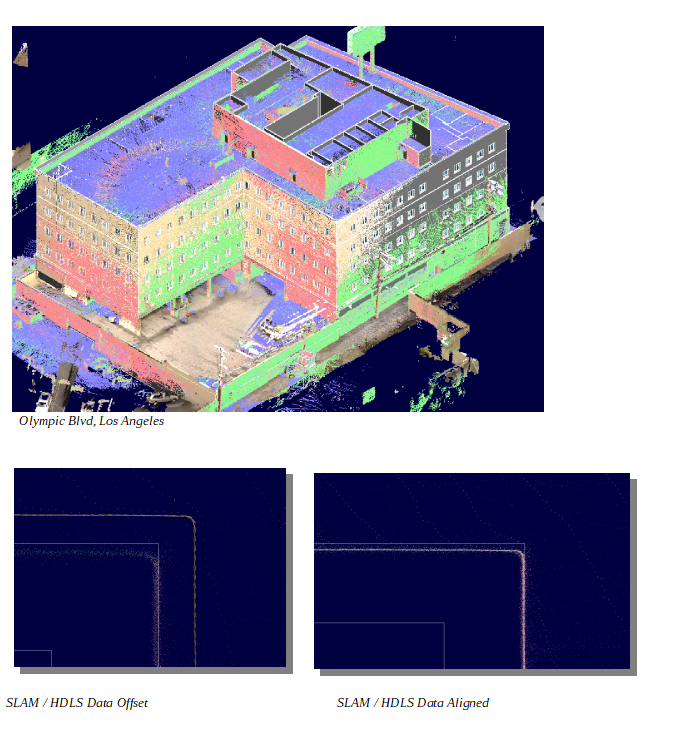

Below are some screenshots that will put most to sleep, but if you’ve read this far, maybe not. The large one is of combined clouds (HDLS / SLAM) of a building we completed in LA. They were registered together and rendered in Revit / ReCap. The first of the smaller ones is a close-up of a wall with just the SLAM cloud. The second is the HDLS data overlaid on the wall in Revit, and the last is just the data. You’ll notice how tight the HDLS line is, with very little spread between the data points. The SLAM data looks faded in comparison, and the “spread” between the farthest and closest data points is about 1/2”. In other words, the HDLS line looks like it was drawn with a ballpoint pen, the SLAM line with a highlighter. Experience in dealing with both sets, and knowing their pros and cons, helps.
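The pen-versus-highlighter comparison can be measured as the spread of point offsets perpendicular to the wall line. The sketch below assumes a plan-view slice and a known wall line; the sample points are invented so that the SLAM set shows roughly the 1/2” spread mentioned above, and they are not taken from the LA project.

```python
import math

def signed_offset(p, a, b):
    """Signed perpendicular distance from point p to the line through a and b."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    length = math.hypot(bx - ax, by - ay)
    return ((bx - ax) * (py - ay) - (by - ay) * (px - ax)) / length

def spread(points, a, b):
    """Distance between the farthest and closest points, measured across the wall line."""
    offsets = [signed_offset(p, a, b) for p in points]
    return max(offsets) - min(offsets)

# Made-up plan-view points in inches along a 10 ft wall lying on the x axis.
wall_start, wall_end = (0.0, 0.0), (120.0, 0.0)
hdls_points = [(10.0, 0.02), (40.0, -0.03), (80.0, 0.01), (110.0, -0.02)]
slam_points = [(10.0, 0.25), (40.0, -0.20), (80.0, 0.10), (110.0, -0.25)]

print(f"HDLS spread: {spread(hdls_points, wall_start, wall_end):.2f} in")
print(f"SLAM spread: {spread(slam_points, wall_start, wall_end):.2f} in")
```

When modeling from the wider band, the usual judgment call is where inside that band the wall face actually sits, which is where experience with both data sets comes in.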